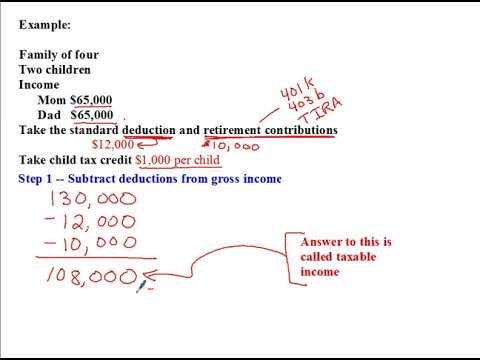

All right, welcome back to another podcast with Mr. Haggin. On this podcast, we're going to look at some practice problems, some examples of calculating income taxes. We're going to use a three-step process that I talked about in a previous video. Step 1 is subtracting away deductions, Step 2 is calculating taxes owed using a tax table, and Step 3 is subtracting away any tax credits. Finally, we'll calculate the average tax rate and the marginal tax rate at the end.

Now, let's dive into an example. We have a family of four with two children. Mom earns $65,000 per year and Dad earns $65,000 per year. For this example, we will assume a standard deduction of $12,000 for a married couple filing jointly. Along with that, they also get a deduction for their retirement contributions. Let's say they decide to contribute $10,000 into their retirement accounts. These retirement accounts can be things like a 401(k), a 403(b), or a traditional IRA. They can invest the money in stocks, bonds, and other investments. The important thing for us right now is that they get a tax deduction for the money they put into these accounts. So, if they put $10,000 into retirement accounts, they get a $10,000 deduction on their taxes.

Please note that the tax law information mentioned here is based on the laws at the beginning of 2018 and may have changed since then.
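The three-step process from the transcript can be sketched in a few lines of Python. This is only a rough illustration: the income and deduction figures come from the example above, but the bracket table, the credit amount, and all function names are hypothetical placeholders and are not actual IRS values. The sketch also assumes that the average tax rate is measured against total income and that credits cannot push the tax owed below zero.

```python
# HYPOTHETICAL brackets for illustration only (not actual IRS figures).
# Each entry is (lower bound of the bracket, rate applied above that bound).
HYPOTHETICAL_BRACKETS = [(0, 0.10), (20_000, 0.12), (80_000, 0.22)]


def taxable_income(gross, deductions):
    """Step 1: subtract deductions from gross income."""
    return max(0, gross - sum(deductions))


def tax_from_brackets(income, brackets):
    """Step 2: tax each slice of income at the rate of its bracket."""
    tax = 0.0
    for i, (lower, rate) in enumerate(brackets):
        upper = brackets[i + 1][0] if i + 1 < len(brackets) else float("inf")
        if income > lower:
            tax += (min(income, upper) - lower) * rate
    return tax


def apply_credits(tax, credits):
    """Step 3: subtract credits (assumed here not to go below zero)."""
    return max(0.0, tax - credits)


def marginal_rate(income, brackets):
    """Rate that applies to the last dollar of taxable income."""
    rate = brackets[0][1]
    for lower, bracket_rate in brackets:
        if income > lower:
            rate = bracket_rate
    return rate


gross = 65_000 + 65_000            # Mom's and Dad's salaries from the example
deductions = [12_000, 10_000]      # standard deduction + retirement contributions
HYPOTHETICAL_CREDITS = 2_000       # placeholder amount, not from the transcript

income = taxable_income(gross, deductions)            # 108000
tax = tax_from_brackets(income, HYPOTHETICAL_BRACKETS)
owed = apply_credits(tax, HYPOTHETICAL_CREDITS)
average = owed / gross                                 # assumed definition

print(f"Taxable income: {income}")
print(f"Tax owed after credits: {owed:,.2f}")
print(f"Average rate: {average:.1%}")
print(f"Marginal rate: {marginal_rate(income, HYPOTHETICAL_BRACKETS):.0%}")
```

Keeping each step in its own function mirrors the order described in the transcript and makes it easy to swap in a real tax table or real credit amounts for a given year.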

1040 sample Form: What You Should Know

You could use a form such as the one below if your household income is below the limit. If you owe no taxes, you would use the “Individual Income Tax Tables” on the Illinois Individual Income Tax Form: IRS Form 1040A, Form 1040EZ, Form 1040NR, Form 1040SR, and Form 1045. If your income is above the limit, you would complete a standard Illinois 1040 and then fill out the form based on your federal income tax withholding. Use the information on your Form 5498 or Form 2103 or your federal tax return. A common tax withheld payment is 28% of your adjusted gross income.

Form 1040, Schedule B. A Form 1040 with your federal return and state return. Get a copy of your tax return from the IRS.gov website. For an example of filing Form 1040, see Form 1040 Tax Return, Example (Form 1040NR). Get a copy of your state-employee W-2s.

Filing online with Illinois' state income tax return is very fast and easy. See the online guide and sample for Form 1120 (PDF). Fill out Form 1040, Schedule E, Part I. Pay your state income taxes on time. Get instructions at the IRS.gov website.

Get Form 540-T. Pay income taxes from Form 540. You do not have to fill out Form 560-T. Get a copy of your federal income tax return. Form 540-T, Individual Income Tax Return (PDF). Form 540-T, Federal Income Taxes and Other Information (PDF).

Get an Intraday Money Order for your federal tax payment. Send payment by mail or fax to the following address: Illinois Department of Revenue, PO BOX 100, 601 W. Green lawn Avenue, Peoria, IL 6. Mail your money order to the following address: U.S. Department of the Treasury, Internal Revenue Service, Office of Tax and Investment Services, PO Box 790290, Washington, DC 20.

You can download and print the following instructions: IRS, Paying Tax Online (PDF). Form 540 and Form 706 (PDF).

Online solutions help you manage your records and improve the efficiency of your workflows. Follow this quick guide to complete Form instructions 1040-a, avoid errors, and submit it on time:

How to complete any Form instructions 1040-a online:

- On the site with the document, click Begin and continue to the editor.

- Use the on-screen hints to fill in the relevant fields.

- Add your personal information and contact details.

- Make sure you enter the correct details and numbers in the appropriate fields.

- Carefully check the content of the form, as well as grammar and punctuation.

- Go to the Support area if you have questions, or contact our support team.

- Add an electronic signature to your Form instructions 1040-a using the Sign tool.

- After the form is complete, press Completed.

- Send the completed document by email or fax, print it out, or save it to your device.

The PDF editor lets you make changes to your Form instructions 1040-a from any internet-connected device, customize it to your requirements, sign it electronically, and distribute it in different ways.

Video instructions and help with filling out and completing 1040 sample